After bringing Data Privacy Day to campus seventeen years ago, Duke faculty Jolynn Dellinger and David Hoffman co-moderated this year’s event at the Law School on January 28. Seated between them were attorneys Joshua Stein and Carol Villegas, partners at the law firms Boies Schiller Flexner LLP and Labaton Keller Sucharow, respectively. Both firms are in the midst of multiple lawsuits against corporate giants; Boies Schiller joined a lawsuit against Meta last year, while Labaton is currently involved in data privacy-related suits against Meta, Flo Health, Amazon, and Google.

Villegas began by emphasizing the importance of legal action on these issues in light of inadequate legislation. She pointed to the confusion senators showed during Mark Zuckerberg’s testimony on the Cambridge Analytica scandal, in which the data of over 50 million Facebook users was misused for political purposes. “They don’t understand it… You can’t expect a legislature like that to make any kind of laws [on data privacy], not to mention technology is just moving way too fast,” Villegas said.

Facebook and most social media platforms generate revenue through advertisements. While many people are aware that these sites track their activity to better target users with ads, they may not know that these companies can collect data from outside of social apps. So, what does that look like?

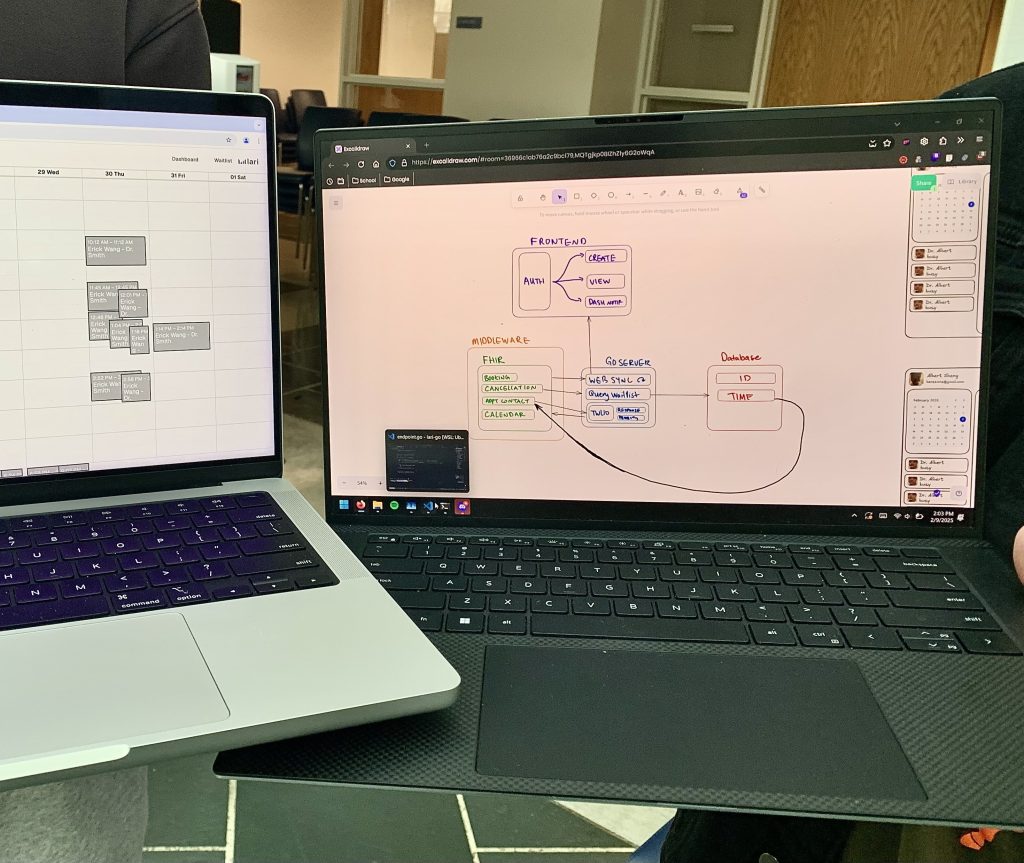

Almost all apps are built using Software Development Kits (SDKs), which not only make it easier for developers to create apps but also track analytics. Tracking pixels play a similar role on websites. These kits and pixels are often provided for free by companies like Google and Meta, and it’s not too difficult to guess why this might be an issue. “An SDK is almost like an information highway,” Villegas said. “They’re getting all of the data that you’re putting into an app. So every time you press a button in an app, you know, you answer a survey in an app, buy something in an app, all of that information is making its way to Meta and Google to be used in their algorithm.”

So, there’s more at stake than just your data being tracked on Instagram; tracking pixels are often used by hospitals, raising the concern of sensitive health data being shared with third parties. The popular women’s health app Flo helps users track their fertility and menstrual cycle, information it promised to keep private. Yet in Frasco v. Flo Health, Labaton alleged that the company broke that promise and violated the Confidentiality of Medical Information Act (CMIA) by illegally transmitting data via Software Development Kits to companies like Google and Meta. Flo Health ended up settling out of court with the Federal Trade Commission (FTC) without admitting wrongdoing, though Google failed to escape the case, which remains ongoing.

It’s not only lawyers who are instrumental to this process. In cases like the ones that Stein and Villegas work on, academics and researchers can play key roles as expert witnesses. From psychiatrists to computer scientists, these experts explain the technical aspects and provide a scientific basis for the judge and jury. Getting a great expert is costly and a significant challenge in itself. Ideally, they’d be well-regarded in their field, have very specialized knowledge, and have some understanding of court proceedings. “There are really important ways your experts will get attacked for their credibility, for their analysis, for their conclusions, and their qualifications even,” said Stein, referring to Daubert challenges, which can result in expert testimony being excluded from trial.

The task of finding experts becomes even more daunting when going up against companies as colossal and profitable as Meta. “One issue that’s come up in AI cases, is finding an expert in AI that isn’t being paid by one of these large technology companies or have… grants or funding from one of these companies. And I got turned down by a lot of experts because of that issue,” Stein said.

Ultimately, some users don’t care that much if their data is being shared, making it more difficult to address privacy and hold corporations accountable. The aforementioned cases filed by Labaton are class action lawsuits, meaning that a small group of plaintiffs represents a much larger group of individuals, such as all users of a certain app within a given timeframe. Yes, it may seem pointless to push for data privacy when even the best outcomes in these cases only entitle individuals to small sums of money, often no more than $30. However, these cases have an arguably more important consequence: when successful, they force companies to change their behavior, even if only through small changes to their services.

By Crystal Han, Class of 2028